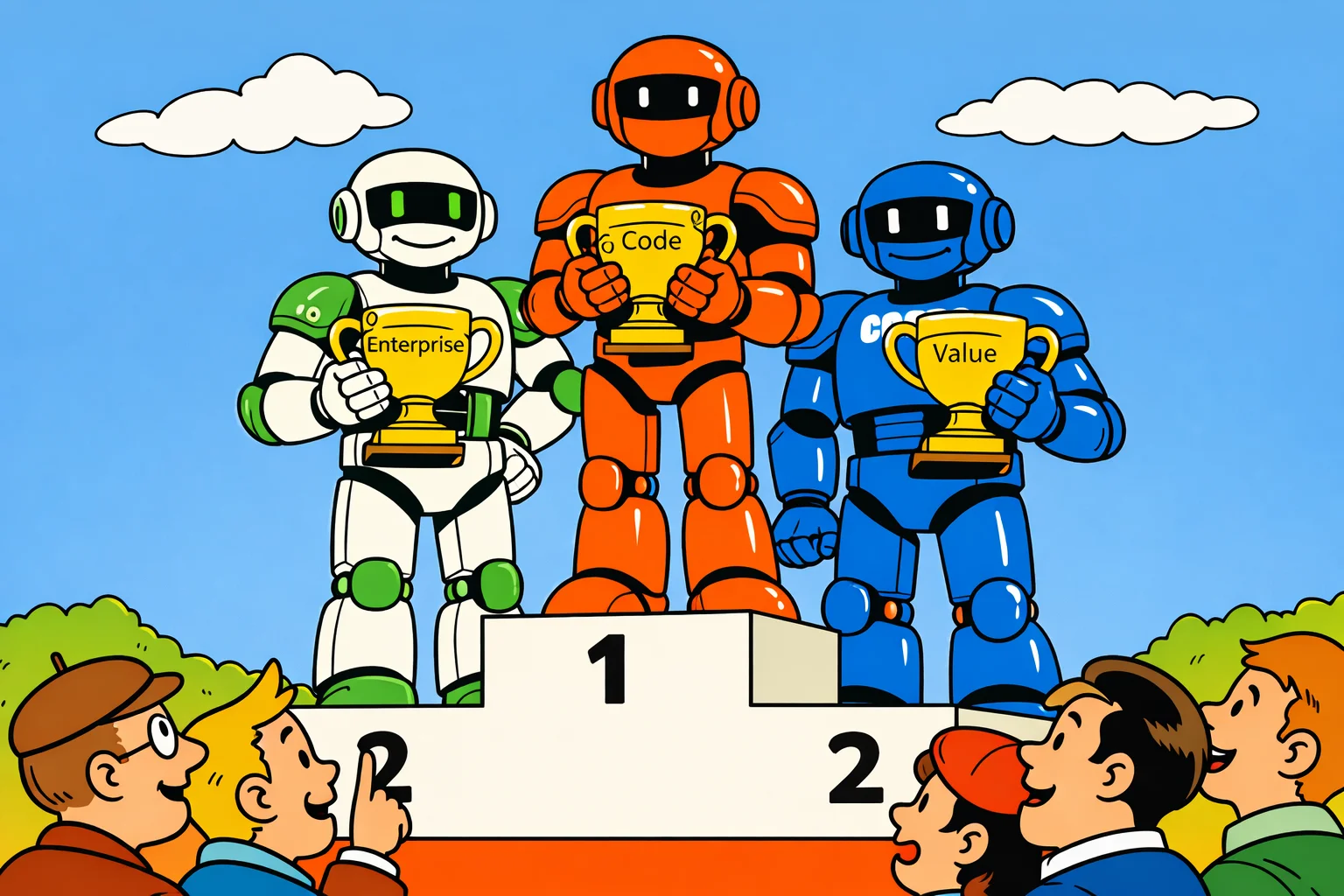

The AI benchmark race is more competitive than ever in March 2026, with more than 255 new model releases in Q1 alone. OpenAI's GPT-5.4 leads in enterprise deployments and computer-use tasks and consistently scores highest on agentic benchmarks. Anthropic's Claude Opus 4.6 takes the top spot in coding and long-context reasoning, outperforming rivals on SWE-Bench and in multi-step problem solving. Google's Gemini 3.1 Pro dominates multimodal tasks and offers the strongest price-performance ratio for high-volume API users. There is no longer a single winner: different models lead in different categories, and the smartest teams use all three depending on the task.

BesserAI English Edition

GPT-5.4 vs Claude vs Gemini: Who Wins March 2026?

The AI benchmark war heats up: GPT-5.4, Claude Opus 4.6, and Gemini 3.1 Pro go head-to-head. Here's who leads in March 2026.
